Inventing The AI Lens Feature

A breakthrough AI feature that lets users point to any UI object on screen and engage an AI assistant with full visual context, transforming how humans interact with complex data.

Organization: Cisco

Role: Inventor, Design Lead

About

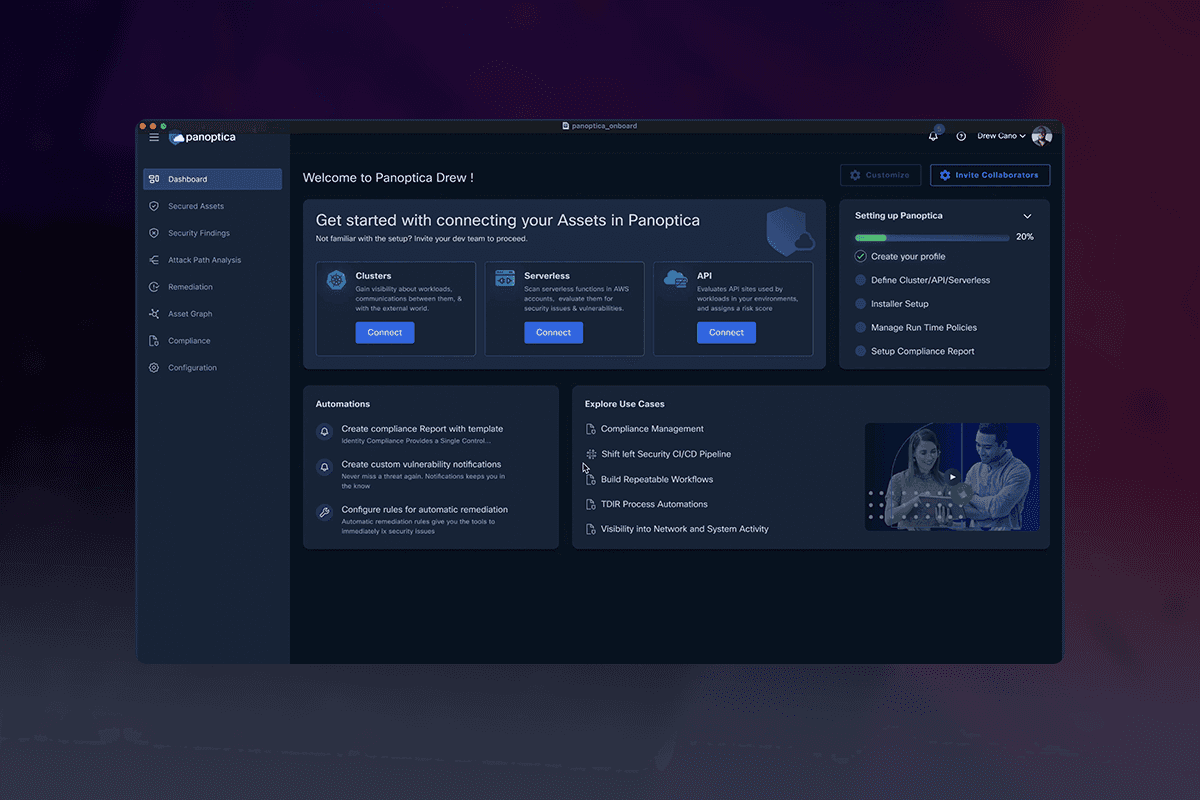

AI “Lens” is an AI feature that augments user interaction with any UI object visible on the screen. It provides an AI assistant that understands the selected screen elements and responds in their context, improving user workflows and task completion rates.

People naturally collaborate by pointing at something and discussing it. AI Lens works the same way, giving users a new way to work with AI assistants when discussing complex data.

Three Foundational Principles

Lens operates on three foundational principles:

- Co-Work with Context: The AI should act like a colleague, understanding context across platforms.

- Trust but Verify: Users can challenge the AI’s responses and check how it reached them, which builds trust.

- Point and Ask: Users can point to any object on the screen to interact with the AI, similar to asking a colleague questions about specific items.

Challenge

How do we design human-agent interfaces that are consistent, comprehensible and scalable across products?

Creating Lens involved overcoming several complex hurdles. The primary challenge was to design an AI system that could accurately understand and interact with diverse UI objects across different platforms and applications.

The AI needed to provide context-aware assistance in real time, maintain an intuitive user experience, and build user trust through verifiable interactions. Supporting multi-selection and understanding the relationships between different UI objects added further complexity to the project.

Point. Click. Context.

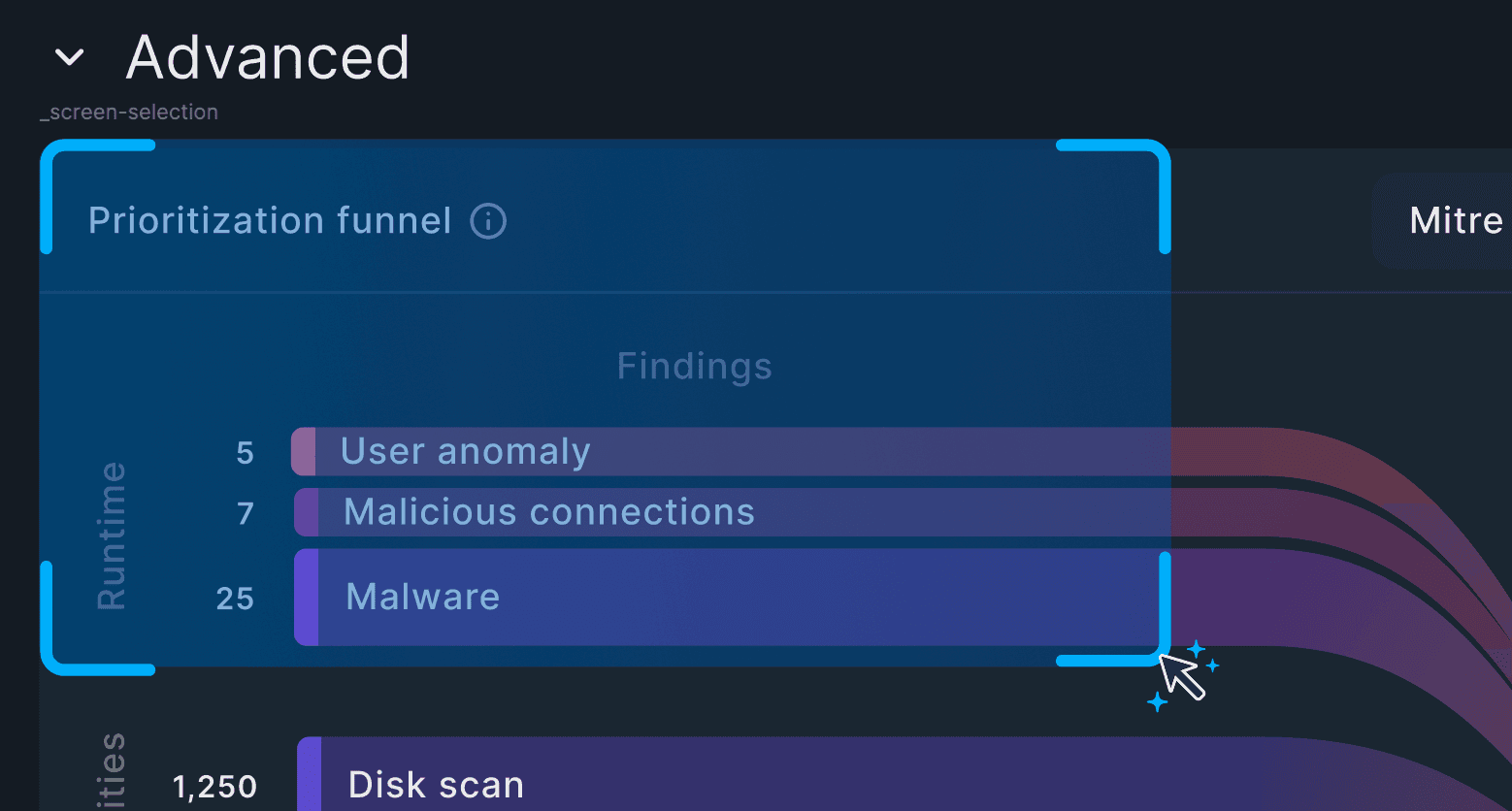

Contextual Selection

Using the select tool, the user drags over any visual area of the software product to focus the AI Assistant on the object.

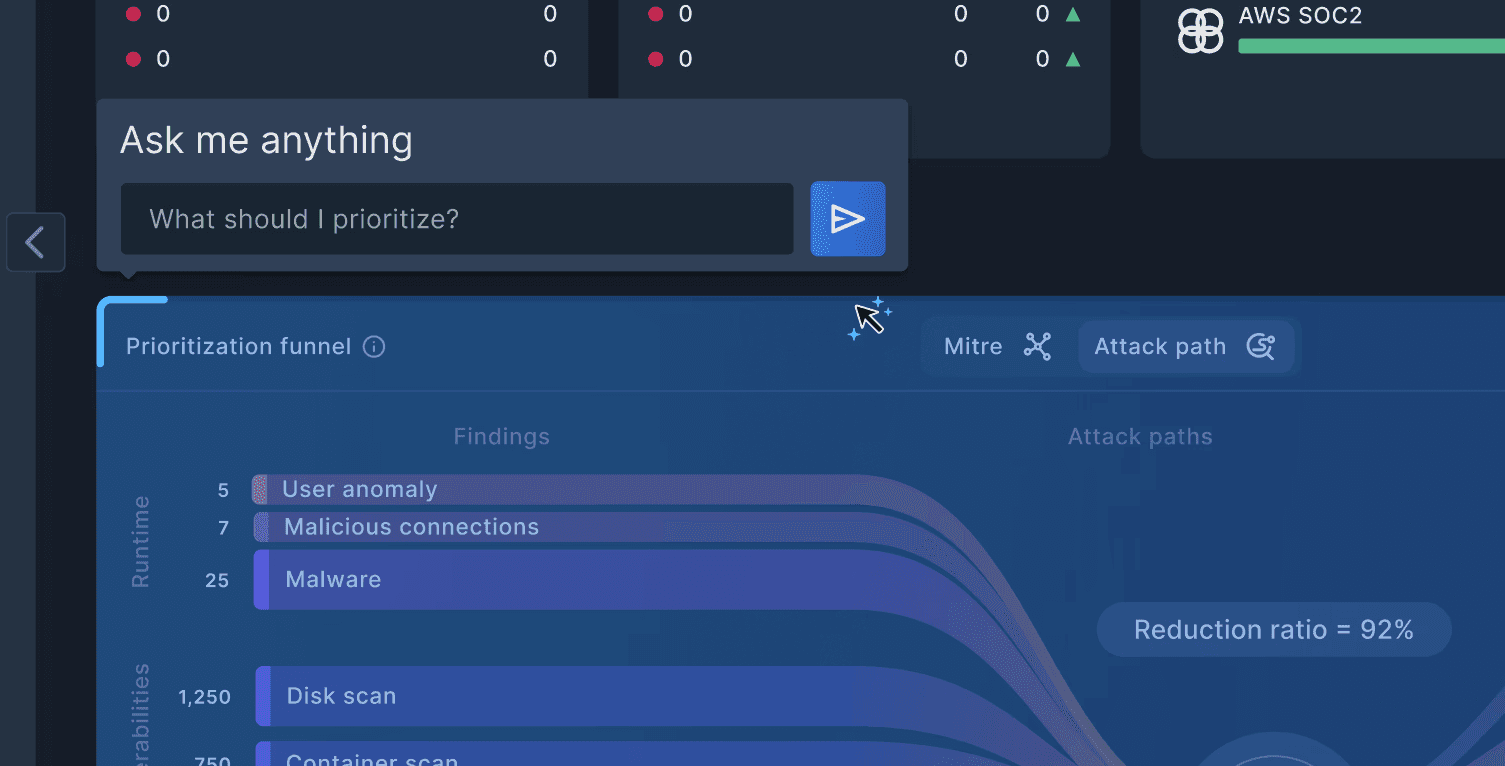

Contextual Prompt

Once an area is selected, the user can ask the AI anything about that object and get a contextual answer.

Contextual Response

The response relates directly to the visual prompt, that is, to the object the user selected.
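The select-then-ask flow above can be illustrated with a small sketch. This is not the shipped Lens implementation; it assumes, purely for illustration, that each UI object exposes a bounding box and a label, and that the selection is a dragged rectangle. Every name here (`Rect`, `UIObject`, `select_objects`, `build_contextual_prompt`) is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Axis-aligned rectangle in screen coordinates (hypothetical model)."""
    x: float
    y: float
    width: float
    height: float

    def intersects(self, other: "Rect") -> bool:
        # Two rectangles overlap when they overlap on both axes.
        return (
            self.x < other.x + other.width
            and other.x < self.x + self.width
            and self.y < other.y + other.height
            and other.y < self.y + self.height
        )


@dataclass(frozen=True)
class UIObject:
    """A UI element the assistant can be pointed at (hypothetical model)."""
    object_id: str
    kind: str
    label: str
    bounds: Rect


def select_objects(selection: Rect, objects: list[UIObject]) -> list[UIObject]:
    """Contextual selection: keep every object the dragged area touches."""
    return [obj for obj in objects if selection.intersects(obj.bounds)]


def build_contextual_prompt(question: str, selected: list[UIObject]) -> str:
    """Contextual prompt: attach the selected objects to the user's question."""
    if not selected:
        return question
    context = "\n".join(
        f"- {obj.kind} '{obj.label}' (id={obj.object_id})" for obj in selected
    )
    return (
        "The user selected these on-screen objects:\n"
        f"{context}\n\nQuestion: {question}"
    )


# Example: the user drags over part of a chart and asks about it.
screen = [
    UIObject("chart-1", "chart", "Latency over time", Rect(0, 0, 400, 300)),
    UIObject("table-1", "table", "Device list", Rect(420, 0, 300, 300)),
]
selected = select_objects(Rect(50, 50, 100, 100), screen)
prompt = build_contextual_prompt("Why did this spike?", selected)
```

Because `select_objects` returns a list, the same sketch covers multi-selection: a drag that touches several objects puts all of them into the prompt context.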

Process

We ran a design discovery process that let us explore and validate ideas systematically, so that each phase, from discovery to release, was grounded in evidence and iterative improvement.

- Discovery and Hypothesis: Collaborated across teams to understand unique business reasons, user landscapes, and industry challenges. Defined the hypothesis to test.

- Prototyping: Created varying fidelity prototypes to test the hypothesis with users, illustrating the intended narrative.

- Validation: Conducted user interviews and collected feedback continuously to refine the hypothesis and the prototype.

- User Story Mapping: Used workshops to incorporate user feedback and validate the story map with engineering teams, guiding development priorities.

- Implementation and Testing: Integrated prototypes into the real environment, gathering immediate feedback and iterating rapidly.

- Final Release: Launched the feature based on rigorous testing and validation to ensure it met the user’s needs and the business objectives.