When AI Needs a Human: What Car Buying Reveals About the Real World

Lately, my work has me thinking a lot about what it really means to build useful AI agents, especially in the context of designing systems where humans and machines operate effectively together.

That’s what caught my attention about Delivrd. It’s a car-buying concierge service that, at first glance, looks like it could be automated. It collects preferences, gathers data, negotiates, and presents options.

Sounds like an agent, right?

But Delivrd isn’t an agentic process. It’s run by a real person with deep car sales experience, someone who’s been on the other side of the deal and knows how dealerships operate from the inside. That background makes a difference when navigating ambiguity, dealing with inconsistent systems, and getting straight answers from salespeople who often withhold key information.

And that disconnect is exactly why I’m writing this.

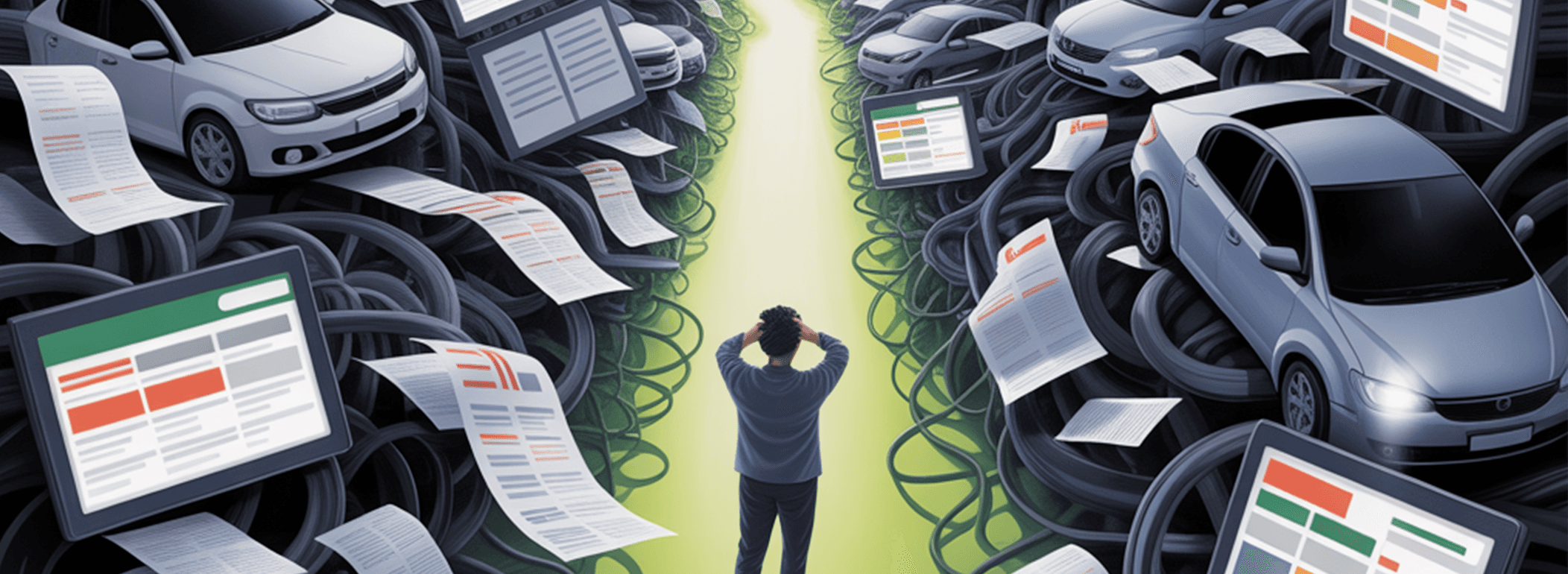

The car-buying process is a sharp illustration of where the abstraction of AI agents breaks down. It looks agent-friendly, with structured inputs and repeatable steps. But behind that flow is a deeply human mess.

For anyone working on agent design, it’s a revealing case study, because the process simply wouldn’t work if you tried to automate it with AI today.

What Delivrd Actually Does

When a user contacts Delivrd, the process looks something like this:

- Collect requirements: Budget, model preferences, location, timing

- Search public inventory: Cars.com, Autotrader, dealer sites

- Screen for quality: VIN lookups, accident history, pricing outliers

- Contact dealerships: Phone calls, emails, persistent follow-up

- Negotiate price: They ask for “out-the-door” pricing and push for better terms

- Summarize options: Clear report to the buyer with best choices

- Coordinate next steps: Delivery, paperwork, scheduling test drives

This is a classic agent pipeline, right?

The Fantasy: How AI Agents Could Handle It

You could imagine a system built from a few LLMs, a rules engine, and API access to inventory sites. We’d likely break it down into distinct AI agents:

- Search Agent pulls current listings based on constraints.

- Filtering Agent runs VIN checks, flags problems, and compares prices against the market.

- Negotiation Agent sends emails to sales managers asking for best prices.

- Summarizing Agent packages the findings into a simple dashboard.

- Scheduling Agent books appointments or delivery.

And sure, with decent tools and some fine-tuning, you could probably automate a lot of this today.
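To make the fantasy concrete, here is a minimal Python sketch of that five-agent decomposition. Everything in it is hypothetical: the `Listing` and `Requirements` fields, the 10% price-delta threshold, the agent function names, and the sample inventory are invented for illustration, and each "agent" is a plain function standing in for what would really be an LLM call or an API integration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical data model; field names are illustrative, not from any real listing API.
@dataclass
class Listing:
    vin: str
    model: str
    advertised_price: int   # USD as listed (in practice, often excludes fees)
    market_price: int       # hypothetical market benchmark for the same car, USD
    accident_reported: bool = False

@dataclass
class Requirements:
    model: str
    budget: int  # USD

def search_agent(reqs: Requirements, inventory: list[Listing]) -> list[Listing]:
    """Pull current listings that match the buyer's constraints."""
    return [car for car in inventory
            if car.model == reqs.model and car.advertised_price <= reqs.budget]

def filtering_agent(listings: list[Listing], max_delta: float = 0.10) -> list[Listing]:
    """Drop cars with a reported accident or a price far from the market benchmark."""
    kept = []
    for car in listings:
        delta = abs(car.advertised_price - car.market_price) / car.market_price
        if not car.accident_reported and delta <= max_delta:
            kept.append(car)
    return kept

def negotiation_agent(listings: list[Listing]) -> dict[str, str]:
    """Draft one 'best out-the-door price' email per listing (sending is out of scope)."""
    return {car.vin: f"What is your best out-the-door price on VIN {car.vin}?"
            for car in listings}

def summarizing_agent(listings: list[Listing]) -> list[Listing]:
    """Rank the surviving options, cheapest advertised price first."""
    return sorted(listings, key=lambda car: car.advertised_price)

def scheduling_agent(best: Optional[Listing]) -> str:
    """Request a test drive for the top option, if there is one."""
    return f"Test drive requested for {best.vin}" if best else "Nothing to schedule"

def run_pipeline(reqs: Requirements, inventory: list[Listing]):
    screened = filtering_agent(search_agent(reqs, inventory))
    emails = negotiation_agent(screened)
    ranked = summarizing_agent(screened)
    next_step = scheduling_agent(ranked[0] if ranked else None)
    return ranked, emails, next_step

# Made-up sample inventory, for illustration only.
inventory = [
    Listing("VIN-001", "Model A", 31_000, 32_000),
    Listing("VIN-002", "Model A", 29_500, 32_000),
    Listing("VIN-003", "Model A", 30_000, 31_000, accident_reported=True),
    Listing("VIN-004", "Model B", 24_000, 25_000),
    Listing("VIN-005", "Model A", 36_000, 34_000),
]
ranked, emails, next_step = run_pipeline(Requirements("Model A", 35_000), inventory)
print([car.vin for car in ranked])  # ['VIN-002', 'VIN-001']
print(next_step)                    # Test drive requested for VIN-002
```

Every stage is easy to write precisely because it trusts its inputs: the advertised price is treated as real, the listing as available, and the drafted email as something a dealer will actually answer.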

So why hasn’t that happened?

The Reality: Where It Falls Apart

- Data isn't trustworthy. Public inventory often lies. Cars listed aren't actually available. Prices don't include fees. Discounts aren't visible unless you ask. AI has no way to validate this unless it calls a human.

- Dealerships aren't built for agents. Most dealers won't respond to a bot; they barely respond to people. They rely on friction, urgency, and emotional pressure to close. An AI trying to play by the rules will be ignored.

- Communication is messy. Sales reps switch constantly. Emails go unread. Phones matter. Tone matters. Urgency matters. A human calling with a confident voice still beats any automated interaction in this context.

- AI has no authority. "I'm representing a buyer ready to move today" means something when a human says it. An AI saying the same thing is just spam.

- No incentive to change. Dealers profit from confusion. They don't want a clean, API-accessible pricing system; the current model is extractive by design. Agents disrupt that, which makes them a threat, not a customer.
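If you built the pipeline anyway, these failure points suggest where a human has to stay in the loop. Here is a minimal, hypothetical Python sketch of that routing. The `Task` fields, the `route` rules, and the task list are my own simplification of the points above, not a description of how Delivrd actually works.

```python
from dataclasses import dataclass
from enum import Enum

class Route(Enum):
    AGENT = "agent"
    HUMAN = "human"

@dataclass
class Task:
    name: str
    needs_dealer_contact: bool     # calls, negotiation, persistent follow-up
    uses_listing_claims: bool      # availability, fees, or discounts taken from a listing
    confirmed_by_human: bool = False

def route(task: Task) -> Route:
    """Send a task to a person when it depends on a dealer or on unconfirmed listing data."""
    if task.needs_dealer_contact:
        return Route.HUMAN  # a bot's email is easy to ignore; a confident caller isn't
    if task.uses_listing_claims and not task.confirmed_by_human:
        return Route.HUMAN  # public inventory can't be trusted as-is
    return Route.AGENT

# Hypothetical breakdown of the car-buying steps described earlier.
tasks = [
    Task("Collect requirements", needs_dealer_contact=False, uses_listing_claims=False),
    Task("Search public inventory", needs_dealer_contact=False, uses_listing_claims=False),
    Task("Shortlist by advertised price", needs_dealer_contact=False, uses_listing_claims=True),
    Task("Negotiate out-the-door price", needs_dealer_contact=True, uses_listing_claims=True),
    Task("Summarize confirmed quotes", needs_dealer_contact=False, uses_listing_claims=True,
         confirmed_by_human=True),
]

for t in tasks:
    print(f"{t.name}: {route(t).value}")
```

The rules are deliberately blunt: anything that touches a dealer, or leans on listing data nobody has confirmed, lands with a person, which in practice is most of the steps that matter.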

What This Teaches Us About Agents in the Real World

Delivrd isn’t an agent; it’s a human navigating the kind of workflow we often imagine automating with AI.

And if you really want to understand this human element, check out Delivrd’s YouTube channel, where founder Tomi Mikula can be seen haggling with car salespeople over the phone, navigating tone, leverage, and pushback in real time.

My developer colleagues will remind me that the data parsing and structured reasoning are solvable problems here. But the parts that matter most still aren’t. Human behavior is messy, inconsistent, and intentionally opaque.

Until that changes, agents might help you find a car. But doing something as humanly complex as closing a deal still takes someone who can navigate ambiguity, read intent, and manage dynamic conversations, skills no system has mastered (yet).

This isn’t just about car buying. Processes shaped by interpersonal communication, opaque information, or shifting incentives will continue to be the hardest areas for agentic systems to tackle.